The Hidden Truth of the Central Limit Theorem

It’s About Means, Not Samples

You might have come across multiple posts on the Central Limit Theorem (CLT). I want to clarify a few aspects of it.

At its core, the theorem describes the distribution of the sample mean (average), not the distribution of the individual observations within a sample.

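This focus on sample means is what makes the theorem so powerful: it lets you reason about averages even when you know little about the shape of the population they come from.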
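For reference, the classical (Lindeberg–Lévy) form of the theorem makes this explicit. For independent, identically distributed draws X_1, …, X_N with mean μ and finite variance σ²:

```latex
\bar{X}_N = \frac{1}{N} \sum_{i=1}^{N} X_i,
\qquad
\frac{\sqrt{N}\,\bigl(\bar{X}_N - \mu\bigr)}{\sigma} \xrightarrow{\;d\;} \mathcal{N}(0, 1)
\quad \text{as } N \to \infty.
```

The limit concerns X̄_N. Each individual X_i keeps the population's distribution, whatever its shape, no matter how large N is.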

To see this in action, you can try a simple simulation:

1. Take K samples, each of size N, from any population with finite variance.

2. Compute the mean of each sample.

3. Plot the histogram of these sample means.
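The three steps above can be sketched in Python using only the standard library. The exponential population, K = 2000, N = 30, the seed, and helper names such as `sample_means` and `text_histogram` are illustrative choices, not part of the original procedure. In practice a plotting library such as matplotlib would replace the crude text histogram.

```python
import random
import statistics


def exponential_draw(rng):
    """One draw from a right-skewed population (Exponential, rate 1; an illustrative choice)."""
    return rng.expovariate(1.0)


def sample_means(draw, k, n, rng):
    """Steps 1-2: take k samples of size n and return the mean of each sample."""
    return [statistics.fmean([draw(rng) for _ in range(n)]) for _ in range(k)]


def skewness(xs):
    """Moment skewness: 0 for a symmetric distribution such as the normal."""
    m = statistics.fmean(xs)
    s = statistics.pstdev(xs)
    return statistics.fmean([((x - m) / s) ** 3 for x in xs])


def text_histogram(xs, bins=15, width=50):
    """Step 3: print a crude text histogram (left bin edge, then a bar of '#')."""
    lo, hi = min(xs), max(xs)
    step = (hi - lo) / bins
    counts = [0] * bins
    for x in xs:
        # Clamp so the maximum value lands in the last bin.
        counts[min(int((x - lo) / step), bins - 1)] += 1
    peak = max(counts)
    for i, c in enumerate(counts):
        print(f"{lo + i * step:8.3f} | {'#' * round(width * c / peak)}")


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are reproducible
    K, N = 2000, 30  # illustrative values, not prescribed by the theorem
    population = [exponential_draw(rng) for _ in range(K)]
    means = sample_means(exponential_draw, K, N, rng)
    print(f"skewness of individual draws: {skewness(population):.2f}")
    print(f"skewness of sample means:     {skewness(means):.2f}")
    text_histogram(means)
```

The skewness of the sample means should come out far smaller than that of the individual draws. Theory gives 2 for the exponential itself and about 0.37 for means of 30 draws. The text histogram of the means should look roughly bell-shaped.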

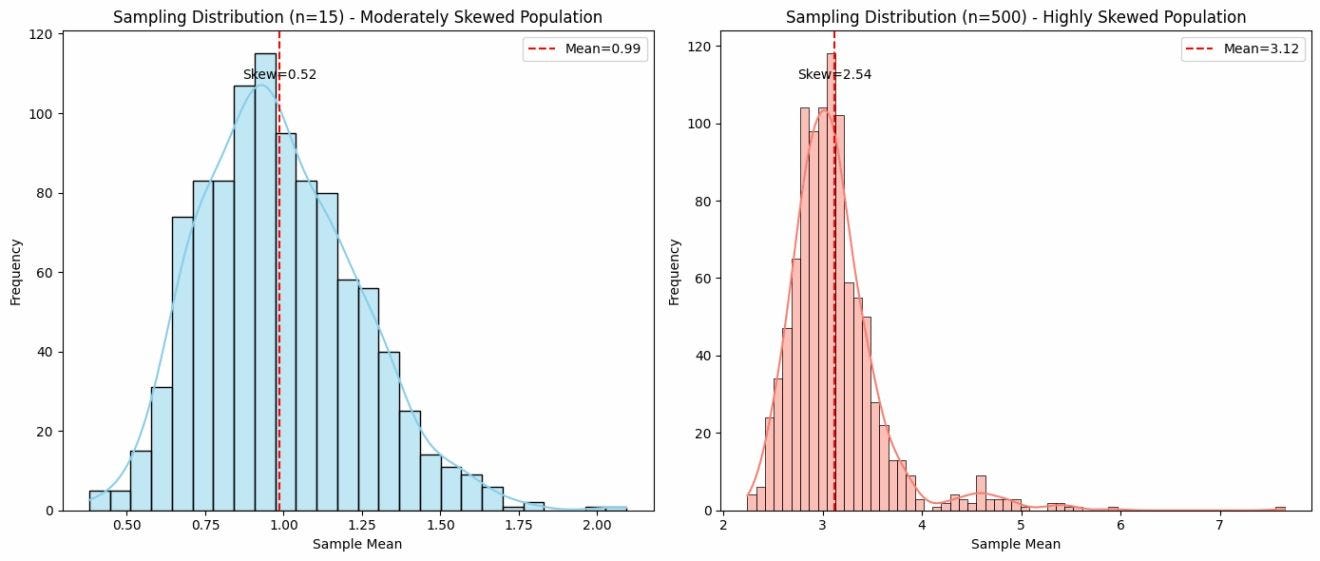

When you do this, you’ll notice that the resulting distribution tends to look normal, even if the original population distribution is far from normal.

A common misconception is that the theorem specifies a minimum sample size. It does not.

In practice, people often say “a sample size greater than 30 is sufficient,” but this is only a rule of thumb, NOT a requirement of the theorem.

Sometimes much smaller samples already produce an approximately normal distribution of means; other times, even samples well above 30 do not, especially when the population is highly skewed or heavy-tailed.
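One way to see why no single cutoff works is to track how fast the skewness of the mean shrinks. Assume the population has a finite third moment and skewness γ₁. The third central moment of a sum of N i.i.d. draws is N times that of one draw, its variance is Nσ², and dividing by N does not change skewness. Therefore:

```latex
\operatorname{skew}(\bar{X}_N)
  = \frac{N\,\mathbb{E}\bigl[(X-\mu)^3\bigr]}{\bigl(N\sigma^2\bigr)^{3/2}}
  = \frac{\gamma_1}{\sqrt{N}},
\qquad
\gamma_1 = \frac{\mathbb{E}\bigl[(X-\mu)^3\bigr]}{\sigma^3}.
```

For an exponential population (γ₁ = 2), N = 30 leaves a skewness of about 2/√30 ≈ 0.37. For a lognormal population whose logarithm has standard deviation 1 (γ₁ = (e + 2)√(e − 1) ≈ 6.18), the same N = 30 leaves about 1.13.

Suppose, purely for illustration, we require skewness of at most 0.5. The condition N ≥ (γ₁ / 0.5)² then gives N ≥ 16 for the exponential but roughly N ≥ 153 for this lognormal. Skewness is only one measure of departure from normality, but it shows why a fixed threshold cannot be universal.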

An extreme example is the Cauchy distribution. It has no finite mean or variance, so the CLT does not apply at all: the mean of N independent standard Cauchy draws is itself standard Cauchy, no matter how large N is.
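A short standard-library sketch makes the Cauchy failure visible. It compares the spread, measured as the interquartile range (IQR), of 2000 sample means at N = 15 and N = 500. It runs this for a standard Cauchy population and, as a finite-variance contrast, a standard normal one. The sample sizes, seed, and function names are illustrative assumptions.

```python
import math
import random
import statistics


def cauchy_draw(rng):
    """Standard Cauchy via inverse-CDF sampling: tan(pi * (U - 1/2)), U ~ Uniform(0, 1)."""
    return math.tan(math.pi * (rng.random() - 0.5))


def normal_draw(rng):
    """Standard normal draw, used as a finite-variance comparison."""
    return rng.gauss(0.0, 1.0)


def iqr_of_means(draw, k, n, rng):
    """Interquartile range of k sample means, each computed from a sample of size n.

    The IQR is used instead of the standard deviation because the Cauchy
    distribution has no finite variance, so a sample standard deviation is unstable.
    """
    means = [statistics.fmean([draw(rng) for _ in range(n)]) for _ in range(k)]
    q1, _, q3 = statistics.quantiles(means, n=4)
    return q3 - q1


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are reproducible
    for n in (15, 500):
        cauchy_iqr = iqr_of_means(cauchy_draw, 2000, n, rng)
        normal_iqr = iqr_of_means(normal_draw, 2000, n, rng)
        print(f"N={n:>3}: IQR of means, Cauchy = {cauchy_iqr:.3f}, normal = {normal_iqr:.3f}")
```

For the Cauchy population, the IQR of the means should stay near 2 at both sample sizes, which is the IQR of a single standard Cauchy draw. For the normal population, it should shrink roughly in proportion to 1/√N.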

For demonstration purposes, you can compare the distribution of sample means for sample size N = 15 versus N = 500.

In summary, the CLT is about the behavior of sample means (for populations with finite variance), and the N > 30 guideline is a practical rule of thumb, not a strict requirement.